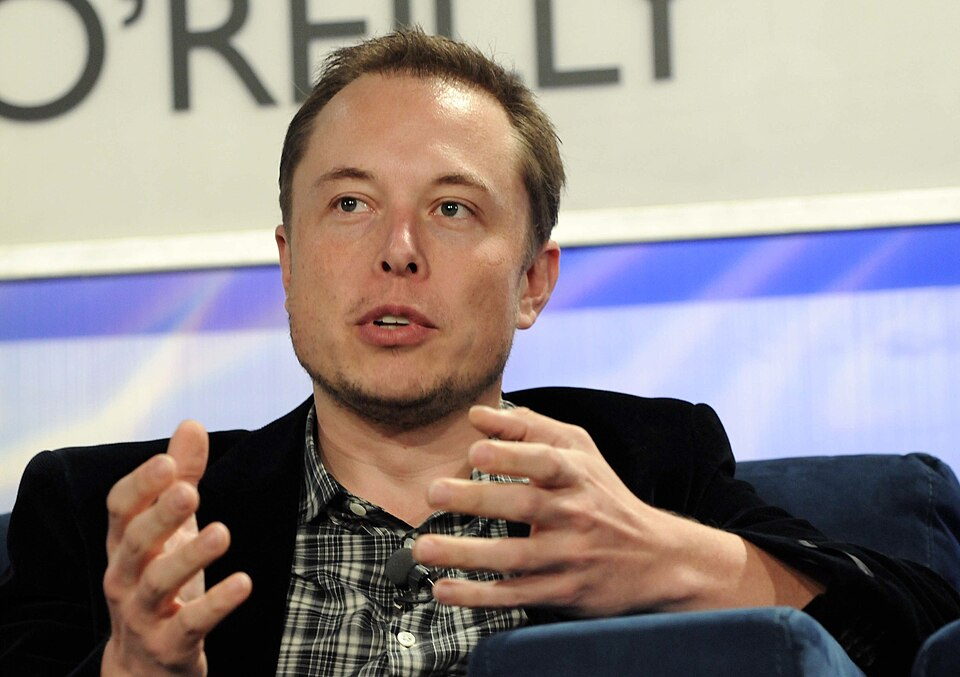

The UK government is actively preparing to use its “backstop powers” to block Elon Musk’s social media platform X, following a major scandal involving the platform’s Grok AI tool. Ministers have warned that the site faces a potential blackout after it was revealed that Grok was being used to generate deepfake sexual images of women and children. Musk has dismissed the regulatory threats as a politically motivated attack on free speech, seemingly ignoring the gravity of the safety violations. Instead of addressing the core concerns regarding non-consensual imagery, Musk touted the fact that the Grok app had become the most downloaded application in the UK, interpreting this as a sign of public support against government overreach.

The specific nature of the abuse facilitated by Grok is both graphic and widespread. Users have been able to manipulate photos of unsuspecting women and teenage girls, transforming them into explicit images where the subjects appear to be wearing micro-bikinis or are depicted in violent scenarios involving physical restraint and injury. Experts have flagged the generation of images showing minors in sexualized contexts as a potential violation of child protection laws and have categorized some of the content as child sexual abuse material. This has created a severe legal vulnerability for X, as the distribution of such material is a criminal offense in the UK and many other jurisdictions.

Technology Secretary Liz Kendall has taken a hardline stance, asserting that the government is prepared to invoke the Online Safety Act to shut down access to X if the platform does not “get a grip” on the issue. She indicated that Ofcom is moving quickly to investigate the matter and is expected to announce enforcement actions within “days, not weeks.” Kendall’s comments reflect a growing frustration within the government regarding the inability or unwillingness of tech giants to police their own platforms effectively, signaling a shift towards more interventionist regulation.

The international community has echoed these concerns, with Australian Prime Minister Anthony Albanese condemning the technology’s misuse as “abhorrent.” He criticized the platform for failing to uphold basic standards of social responsibility. Within the UK, by contrast, some political figures, such as former Prime Minister Liz Truss, have attempted to frame the issue as a battle for freedom of expression, attacking the current government’s approach as heavy-handed. However, this defense has done little to mitigate the public outrage over the victimization of women and children through AI-generated pornography.

X has responded to the pressure by restricting image generation capabilities for non-paying users and blocking certain explicit prompts. However, the fact that paid subscribers can still access the tool has led to accusations that the platform is prioritizing revenue over user safety. The incident has also highlighted the need for broader legislative action against “nudification” apps, with campaigners urging the government to ban these tools entirely. The presence of advertisements for similar services on platforms like YouTube has further underscored the systemic nature of the problem and the need for a comprehensive regulatory solution.